Log as generalized length

Here are a handful of examples of how the logarithm base 10 behaves. Can you spot the pattern?

Every time the input gets one digit longer, the output goes up by one. In other words, the output of the logarithm is roughly the length — measured in digits — of the input. (Why?)
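To see the pattern concretely, here is a small Python sketch. The sample inputs are arbitrary choices made for illustration, and `digit_length` is a hypothetical helper name.

```python
import math

# Sample inputs chosen for illustration (an assumption, not the article's own list).
samples = [2, 7, 22, 70, 139, 316, 1234, 98765]

def digit_length(n: int) -> int:
    """Number of decimal digits needed to write the positive integer n."""
    return len(str(n))

for n in samples:
    # Print each number next to its digit count and its base-10 logarithm.
    print(f"{n:>6}  digits={digit_length(n)}  log10={math.log10(n):.2f}")
```

For each of these inputs, rounding the logarithm down and adding one gives the digit count.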

Why is it the log base 10 (rather than, say, the log base 2) that roughly measures the length of a number? Because numbers are normally represented in decimal notation, where each new digit lets you write down ten times as many numbers. The logarithm base 2 would measure the length of a number if each digit only gave you the ability to write down twice as many numbers. In other words, the log base 2 of a number is roughly the length of that number when it’s represented in binary notation (where \(13\) is written \(\texttt{1101}\) and so on):

If you aren’t familiar with the idea of numbers represented in other number bases besides 10, and you want to learn more, see the number base tutorial.

Here’s an interactive visualization which shows the link between the length of a number expressed in base \(b\), and the logarithm base \(b\) of that number:

As you can see, if \(b\) is an integer greater than 1, then the logarithm base \(b\) of \(x\) is pretty close to the number of digits it takes to write \(x\) in base \(b.\)
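The same comparison can be sketched for other bases. The helper `length_in_base` below is a hypothetical name, and the bases and inputs are arbitrary examples.

```python
import math

def length_in_base(x: int, b: int) -> int:
    """Count the digits of the positive integer x when written in base b (b >= 2)."""
    count = 0
    while x > 0:
        x //= b      # strip off the lowest base-b digit
        count += 1
    return count

for b in (2, 3, 10, 16):
    for x in (13, 200, 5000):
        print(f"x={x:>5} base={b:>2} "
              f"length={length_in_base(x, b)} log={math.log(x, b):.3f}")
```

In base 2, for instance, 13 has length 4 (it is written 1101), and its base-2 logarithm is a bit less than 4.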

Pretty close, but not exactly. The most obvious difference is that the outputs of logarithms generally have a fractional portion: the logarithm of \(x\) always falls a little short of the length of \(x.\) This is because, insofar as logarithms act like the “length” function, they generalize the notion of length, making it continuous.

What does this fractional portion mean? Roughly speaking, logarithms measure not only how long a number is, but also how much that number is really using its digits. 12 and 97 are both two-digit numbers, but intuitively, 12 is “barely” two digits long, whereas 97 is “nearly” three digits. Logarithms formalize this intuition, and tell us that 12 is really only using about 1.08 digits, while 97 is using about 1.99.

Where are these fractions coming from? Also, looking at the examples above, notice that \(\log_{10}(316) \approx 2.5.\) Why is it 316, rather than 500, that logarithms claim is “2.5 digits long”? What would it even mean for a number to be 2.5 digits long? It very clearly takes 3 digits to write down “316,” namely, ‘3’, ‘1’, and ‘6’. What would it mean for a number to use “half a digit”?

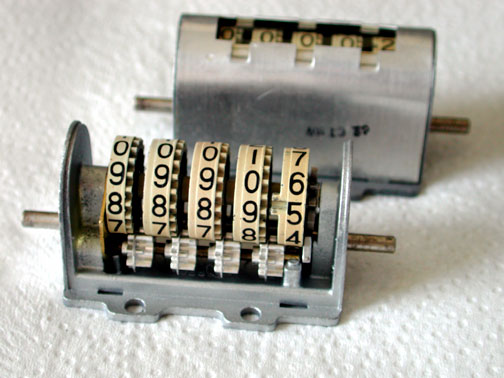

Well, here’s one way to approach the notion of a “partial digit.” Let’s say that you work in a warehouse recording data using digit wheels like they used to have on old desktop computers.

Let’s say that one of your digit wheels is broken, and can’t hold numbers greater than 4 — every notch 5-9 has been stripped off, so if you try to set it to a number between 5 and 9, it just slips down to 4. Let’s call the resulting digit a 5-digit, because it can still be stably placed into 5 different states (0-4). We could easily call this 5-digit a “partial 10-digit.”

The question is, how much of a partial 10-digit is it? Is it half a 10-digit, because it can store 5 of the 10 values that a “full 10-digit” can store? That would be a fine way to measure fractional digits, but it’s not the method used by logarithms. Why? Well, consider a scenario where you have to record lots and lots of numbers on these digits (such that you can tell someone how to read off the right data later), and let’s say also that you have to pay me one dollar for every digit that you use. Now let’s say that I only charge you 50¢ per 5-digit. Then you should do all your work in 5-digits! Why? Because two 5-digits can be used to store 25 different values (00, 01, 02, 03, 04, 10, 11, …, 44) for $1, which is way more data-stored-per-dollar than you would have gotten by buying a 10-digit.

(Note: You may be wondering, are two 5-digits really worth more than one 10-digit? Sure, you can place them in 25 different configurations, but how do you encode “9” when none of the digits have a “9” symbol written on them? If so, see The symbols don’t matter.)

In other words, there’s a natural exchange rate between \(n\)-digits, and a 5-digit is worth more than half a 10-digit. (The actual price you’d be willing to pay is a bit short of 70¢ per 5-digit, for reasons that we’ll explore shortly.) A 4-digit is also worth a bit more than half a 10-digit (two 4-digits let you store 16 different numbers), and a 3-digit is worth a bit less than half a 10-digit (two 3-digits let you store only 9 different numbers).
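These prices can be tabulated with a short sketch. It assumes the exchange rate for an \(n\)-digit is \(\log_{10}(n)\) 10-digits, which matches the figures quoted in this article (just under 70¢ for a 5-digit); the function name is hypothetical.

```python
import math

def value_in_10_digits(n: int) -> float:
    """Worth of one n-digit, measured in 10-digits (assumed here to be log10(n))."""
    return math.log10(n)

for n in (2, 3, 4, 5, 10):
    pair_states = n * n  # two n-digits can be set in n * n different ways
    print(f"{n}-digit: two of them store {pair_states} values; "
          f"one is worth about {value_in_10_digits(n):.3f} of a 10-digit")
```

Under this assumption, a pair of \(n\)-digits beats a single 10-digit exactly when \(n \cdot n\) exceeds 10, which is why the 4-digit lands above 50¢ and the 3-digit below.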

We now begin to see what the fractional answer that comes out of a logarithm actually means (and why 300 is closer to 2.5 digits long than 500 is). The logarithm base 10 of \(x\) is not answering “how many 10-digits does it take to store \(x\)?” It’s answering “how many digits-of-various-kinds does it take to store \(x\), where as many digits as possible are 10-digits; and how big does the final digit have to be?” The fractional portion of the output describes how large the final digit has to be, using this natural exchange rate between digits of different sizes.

For example, the number 200 can be stored using only two 10-digits and one 2-digit. \(\log_{10}(200) \approx 2.301,\) and a 2-digit is worth about 0.301 10-digits. In fact, a 2-digit is worth exactly \((\log_{10}(200) - 2)\) 10-digits. As another example, \(\log_{10}(500) \approx 2.7\) means “to record 500, you need two 10-digits, and also a digit worth at least \(\approx\)70¢”, i.e., two 10-digits and a 5-digit.
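The decomposition in these examples can be sketched as follows. This is a minimal illustration, assuming “storing \(x\)” means telling apart \(x\) different values; `digits_needed` is a hypothetical helper.

```python
import math

def digits_needed(x: int) -> tuple[int, int]:
    """Return (k, m): k full 10-digits plus one m-digit suffice to tell apart x values."""
    k = 0
    while 10 ** (k + 1) <= x:   # use as many full 10-digits as possible
        k += 1
    m = -(-x // 10 ** k)        # ceiling of x / 10**k, in integer arithmetic
    return k, m

for x in (200, 316, 500):
    k, m = digits_needed(x)
    print(f"{x}: {k} ten-digits + one {m}-digit; "
          f"log10({x}) = {math.log10(x):.3f}, k + log10(m) = {k + math.log10(m):.3f}")
```

For 200 and 500 the two printed quantities agree; for 316 the final digit has to be rounded up to a 4-digit, so its price slightly exceeds the fractional part of the logarithm.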

This raises a number of additional questions:

Question: Wait, there is no digit that’s worth 50¢. As you said, a 3-digit is worth less than half a 10-digit (because two 3-digits can only store 9 things), and a 4-digit is worth more than half a 10-digit (because two 4-digits store 16 things). If \(\log_{10}(316) \approx 2.5\) means “you need two 10-digits and a digit worth at least 50¢,” then why not just have the \(\log_{10}\) of everything between 301 and 400 be 2.60? They’re all going to need two 10-digits and a 4-digit, aren’t they?

Answer: The natural exchange rates between digits are actually way more interesting than they first appear. If you’re trying to store either “301” or “400”, and you start with two 10-digits, then you have to purchase a 4-digit in both cases. But if you start with a 10-digit and an 8-digit, then the digit you need to buy is different in the two cases. In the “301” case you can still make do with a 4-digit, because the 10, 8, and 4-digits together give you the ability to store any number up to \(10\cdot 8\cdot 4 = 320\). But in the “400” case you now need to purchase a 5-digit instead, because the 10, 8, and 4-digits together aren’t enough. The logarithm of a number tells you about every combination of \(n\)-digits that would work to encode the number (and more!). This is an idea that we’ll explore over the next few pages, and it will lead us to a much better understanding of logarithms.
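The arithmetic in this answer can be checked with a short sketch. The function names are hypothetical; the capacity of a set of digits is taken to be the product of their sizes, as in the \(10\cdot 8\cdot 4\) example.

```python
from math import prod

def capacity(digit_sizes):
    """Number of distinct values a collection of n-digits can store."""
    return prod(digit_sizes)

def smallest_final_digit(existing, target):
    """Smallest m such that the existing digits plus one m-digit store `target` values."""
    base = capacity(existing)
    return -(-target // base)  # ceiling division

for existing in ([10, 10], [10, 8]):
    for target in (301, 400):
        m = smallest_final_digit(existing, target)
        print(f"start with {existing}, store {target}: buy a {m}-digit")
```

Starting from two 10-digits, both targets call for a 4-digit; starting from a 10-digit and an 8-digit, 301 still needs a 4-digit while 400 needs a 5-digit.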

Question: Hold on, where did the 2.60 number come from above? How did you know that a 5-digit costs 70¢? How are you calculating these exchange rates, and what do they mean?

Answer: Good question. In Exchange rates between digits, we’ll explore what the natural exchange rate between digits is, and why.

Question: \(\log_{10}(100)=2,\) but clearly, 100 is 3 digits long. In fact, \(\log_b(b^k)=k\) for any integers \(b > 1\) and \(k \geq 0\), but \(k+1\) digits are required to represent \(b^k\) in base \(b\) (as a one followed by \(k\) zeroes). Why is the logarithm making these off-by-one errors?

Answer: Secretly, the logarithm of \(x\) isn’t answering the question “how hard is it to write \(x\) down?”, it’s answering something more like “how many digits does it take to record a whole number less than \(x\)?” In other words, the \(\log_{10}\) of 100 is the number of 10-digits you need to be able to name any one of a hundred numbers, and that’s two digits (which can hold anything from 00 to 99).
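The difference between the two questions can be sketched in a few lines. The helper name is hypothetical, and the values being named are assumed to be labelled 0 through \(x - 1\).

```python
import math

def digits_to_name_one_of(x: int) -> int:
    """10-digits needed to tell apart x values, labelled 0 through x - 1 (x >= 2)."""
    return len(str(x - 1))

for x in (99, 100, 101, 1000):
    print(f"x={x}: written length={len(str(x))}, "
          f"digits to name one of x values={digits_to_name_one_of(x)}, "
          f"log10={math.log10(x):.3f}")
```

For \(x = 100\), the written length is 3, but only 2 digits are needed to name any one of a hundred values, in agreement with the logarithm.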

Question: Wait, but what about when the input has a fractional portion? How long is the number 100.87? And also, \(\log_{10}(100.87249072)\) is just a hair higher than 2, but 100.87249072 is way harder to write down than 100. How can you say that their “lengths” are almost the same?

Answer: Great questions! The length interpretation on its own doesn’t shed any light on how logarithm functions handle fractional inputs. We’ll soon develop a second interpretation of logarithms which does explain the behavior on fractional inputs, but we aren’t there yet.

Meanwhile, note that the question “how hard is it to write down an integer between 0 and \(x\) using digits?” is very different from the question “how hard is it to write down \(x\)?” For example, 3 is easy to write down using digits, while \(\pi\) is very difficult to write down using digits. Nevertheless, the log of \(\pi\) is very close to the log of 3. The concept of “how hard is this number to write down?” goes by the name of complexity; see the Kolmogorov complexity tutorial to learn more on this topic.

Question: Speaking of fractional inputs, if \(0 < x < 1\) then the logarithm of \(x\) is negative. How does that square with the length interpretation? What would it even mean for the length of the number \(\frac{1}{10}\) to be \(-1\)?

Answer: Nice catch! The length interpretation crashes and burns when the inputs are less than one.

The “logarithms measure length” interpretation is imperfect. The connection is still useful to understand, because you already have an intuition for how slowly the length of a number grows as the number gets larger. The “length” interpretation is one of the easiest ways to get a gut-level intuition for what logarithmic growth means. If someone says “the amount of time it takes to search my database is logarithmic in the number of entries,” you can get a sense for what this means by remembering that logarithmic growth is like how the length of a number grows with the magnitude of that number:
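A rough way to see this growth, sketched in Python (the helper name `decimal_length` is made up for illustration):

```python
def decimal_length(n: int) -> int:
    """Number of decimal digits in the positive integer n."""
    return len(str(n))

# Multiply n by 1000 at each step and watch how slowly its length grows.
for exponent in range(0, 19, 3):
    n = 10 ** exponent
    print(f"n = {n:>26,}  length = {decimal_length(n)}")
```

Each thousandfold increase in the number adds only three to its length.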

The interpretation doesn’t explain what’s going on when the input is fractional, but it’s still one of the fastest ways to make logarithms start feeling like a natural property of numbers, rather than just some esoteric function that “inverts exponentials.” Length is the quick-and-dirty intuition behind logarithms.

For example, I don’t know what the logarithm base 10 of 2,310,426 is, but I know it’s between 6 and 7, because 2,310,426 is seven digits long.

In fact, I can also tell you that \(\log_{10}(\text{2,310,426})\) is between 6.30 and 6.48. How? Well, I know it takes six 10-digits to get up to 1,000,000, and then we need something more than a 2-digit and less than a 3-digit to get to a number between 2 and 3 million. The natural exchange rates for 2-digits and 3-digits (in terms of 10-digits) are 30¢ and 48¢ respectively, so the cost of 2,310,426 in terms of 10-digits is between $6.30 and $6.48.
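This estimate can be sketched as a small function. It is only an illustration of the reasoning above, with a hypothetical name, and it uses \(\log_{10}\) of the leading digit as the exchange rate.

```python
import math

def log10_bounds(x: int) -> tuple[float, float]:
    """Bracket log10(x) using only the digit count and leading digit of x."""
    s = str(x)
    full_digits = len(s) - 1   # 10-digits needed to reach the leading power of ten
    lead = int(s[0])           # x lies between lead and lead + 1 times that power
    return full_digits + math.log10(lead), full_digits + math.log10(lead + 1)

low, high = log10_bounds(2_310_426)
print(f"{low:.2f} <= log10(2,310,426) < {high:.2f}")
```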

Next up, we’ll be exploring this idea of an exchange rate between different types of digits, and building an even better interpretation of logarithms which helps us understand what they’re doing on fractional inputs (and why).
